Augmented and virtual reality: Exploring a future role in radiation oncology education and training

SA-CME credits are available for this article here.

Abstract

Background: Recent advancements in computer-generated graphics have enabled new technologies such as augmented and virtual reality (AR/VR) to simulate and recreate realistic clinical environments. Their utility has been validated in integrated learning curriculums and surgical procedures. Radiation oncology has opportunities for AR/VR simulation in both training and clinical practice.

Methods: A systematic review of the literature was performed using combinations of the search terms “virtual,” “augmented,” “reality,” “medical student,” and “education” to find articles that examined the effect of AR/VR on anatomy learning and on surgery-naïve participants’ first-time training in procedural tasks. Studies were excluded if nonstereoscopic VR was used, if they were not randomized controlled trials, or if resident-level participants were included.

Results: For learning anatomy and procedural tasks, the studies we found suggested that AR/VR was noninferior to current standards of practice.

Conclusions: These studies suggest that AR/VR programs are noninferior to standards of practice with regard to learning anatomy and training in procedural tasks. Radiation oncology, as a highly complex medical specialty, would benefit from the integration of AR/VR technologies, as they can be cost-effective methods of enhancing training in a field with a narrow therapeutic ratio.

Healthcare providers strive for cost-effective, easily accessible methods to train and practice medicine in this changing landscape. Virtual reality/augmented reality (VR/AR) systems are readily available programs that can realistically simulate clinical environments. These immersive technologies lie on a reality-virtuality continuum.1 A real environment is the reality we live in and is filled with real objects. A virtual environment fills a display device with virtual objects.1 Everything between these two environments can be called mixed reality or extended reality (XR). One platform within XR is AR, in which a display device overlays a digital image onto the field of view of a real environment. Google Glass is considered a “nonimmersive” version of AR, as it projects a computer monitor display into the upper right corner of the field of view. Several factors must be considered when assessing XR technology, and several devices fall within it; these are not discussed further in this paper. These platforms are typically used with either a head-mounted display (HMD) or a monitor-based display device.

The most basic VR programs remain “nonimmersive,” displaying traditional content such as a movie on a computer screen. However, the most advanced VR programs try to emulate 3 sense-based modalities to provide a truly immersive environment: sight, sound, and touch. The HMD-based devices use stereoscopic animations and surround sound, re-creating sight with depth perception and sound with distance localization.2 Haptic feedback, or touch sensation, is on the horizon as well.3-5

AR-based devices work through some form of optic modulation in a medium such as glasses, a smartphone, or possibly contact lenses in the future. Some of the simplest nonmedical AR uses include smartphone applications that use the phone’s gyroscope, internet connection, and global positioning system (GPS) to triangulate and display astronomical constellations on the phone when its camera lens is pointed at the night sky. Regardless of their level of immersion, one aim of these technologies is to help us see things that are difficult to visualize.

Previous iterations of immersive console experiences were unsophisticated, with clunky, pixelated graphics; however, the latest graphics cards can produce photorealistic virtual environments.6,7 In medicine, this advantage can translate to simulating procedures that require precise dexterity and could otherwise harm a patient. The experience required to obtain deft procedural ability would previously have been gained at the expense of real patients. Our surgical colleagues have already noticed the utility of simulated environments using the daVinci Surgical Simulator (dVSS) (Intuitive Surgical Inc.; Sunnyvale, California),8,9 which is of particular interest to radiation oncology residency programs that train young physicians not only in external-beam techniques,10 but also in internal brachytherapy delivery.11 In radiation oncology practice, ensuring the safe delivery of implanted dose is of the highest significance due to the proximity of adjacent normal tissues and the potential for long-term radiation-induced complications. Indeed, quality assurance programs in radiation oncology aim not only to ensure that the graduating physician possesses the technical ability to perform external-beam and brachytherapy delivery, but also that such skill is safely maintained over the lifetime of the practitioner.

The entire practice of radiation oncology is predicated on the individual practitioner’s successful deployment of specific technologies. From contouring anatomical structures, to creating dose angles for treatment, to the technical insertion of permanent radioactive seeds or temporary catheters for high-dose-rate (HDR) brachytherapy, opportunities for AR/VR technology integration are numerous.10,12-14 Clinical application of this new technology will be a challenge, as randomized controlled trials are needed to prevent unnecessary patient harm. A safer way to examine the utility of this technology in preliminary studies is to test its noninferiority against traditional means of training.
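To make the idea of a noninferiority comparison concrete, the following is a minimal sketch, not a method taken from any of the reviewed studies. It assumes higher scores are better, a prespecified noninferiority margin, and a normal approximation for the one-sided confidence bound (a t-based bound would be more appropriate for samples this small). The function name, the margin of 5 points, and the score lists are all hypothetical.

```python
from math import sqrt
from statistics import NormalDist, mean, stdev


def noninferiority_check(new_scores, standard_scores, margin, alpha=0.05):
    """Return (difference, lower_bound, is_noninferior) for higher-is-better scores.

    Uses a normal approximation for the one-sided lower confidence bound on
    the difference in means (new method minus standard method).
    """
    diff = mean(new_scores) - mean(standard_scores)
    # Standard error of the difference between two independent group means.
    se = sqrt(
        stdev(new_scores) ** 2 / len(new_scores)
        + stdev(standard_scores) ** 2 / len(standard_scores)
    )
    z = NormalDist().inv_cdf(1 - alpha)
    lower_bound = diff - z * se
    # Noninferior when even the lower bound stays above the negative margin.
    return diff, lower_bound, lower_bound > -margin


# Hypothetical exam scores (percent correct); not data from any cited study.
vr_group = [78, 82, 75, 80, 85, 79, 77, 83]
cadaver_group = [80, 81, 76, 79, 84, 78, 82, 80]

diff, lower, ok = noninferiority_check(vr_group, cadaver_group, margin=5.0)
print(f"difference={diff:.2f}, lower bound={lower:.2f}, noninferior={ok}")
```

The conclusion depends heavily on the margin chosen, which would need to be justified clinically or educationally before any data are collected.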

The aim of this review is to determine whether AR/VR is a suitable surrogate for training clinically naïve radiation oncology healthcare practitioners. It is hypothesized that the main advantage of AR/VR’s immersive environment is that it helps healthcare professionals understand 3-dimensional (3D) visuospatial representations better than, or at least as well as, traditional textbook learning. Therefore, this study sought articles on topics in which visuospatial learning would be most heavily used: anatomy and simple procedures that require an understanding of anatomy.

Methods and Materials

Search Strategy and Study Eligibility

An initial literature search was performed for articles written in English and published from 1997 to 2017 on the educational use of AR/VR at the medical student level, used here as a surrogate for the entry-level radiation oncology resident. Specifically, articles that dealt strictly with anatomy education and surgery-naïve procedural skills were sought. A combination of the terms “virtual reality,” “augmented reality,” “VR,” “AR,” “medical student,” and “education” was queried.

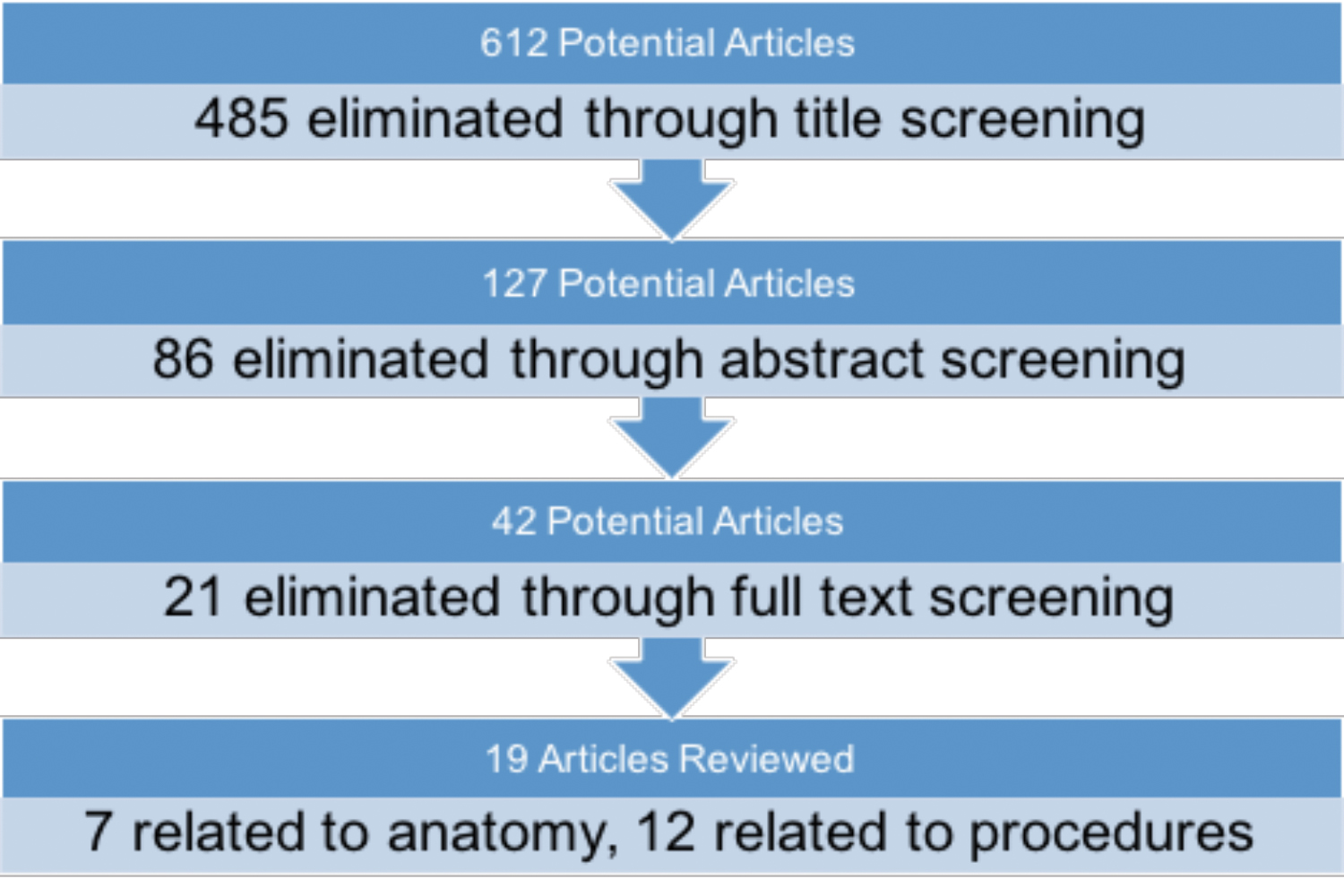

A flow chart of the search algorithm is depicted in Figure 1. The initial search of the literature yielded 612 articles. After an initial screening of article titles, 127 were selected for abstract review. Criteria for exclusion included resident-level anatomy topics or participants; use of nonstereoscopic 3D models; and trials that were not randomized and controlled, were not adequately powered, or were not available in full text. Additionally, studies were excluded if they did not explicitly test a procedural task in a randomized controlled trial. Finally, studies were excluded if they did not use a true stereoscopic VR simulator or AR, or if the final test was not a 2-dimensional (2D) laparoscopic procedure.

After 86 studies were eliminated, 42 articles were reviewed in full text. Finally, 19 articles met inclusion criteria and form the basis for this review. Meta-analysis was not performed due to heterogeneity in the outcomes measured, the controls, and the randomized controlled trial arms.
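The screening flow described above can be tallied with a short script. This is only an illustrative sketch: the stage counts are those reported in the text, while the stage labels and the function name are assumptions. The exclusion figures are derived by simple subtraction between consecutive stages, so they may not match every exclusion count quoted in the text.

```python
# Stage counts as reported in the text; the stage labels are illustrative.
stages = [
    ("records identified by search", 612),
    ("selected for abstract review", 127),
    ("reviewed in full text", 42),
    ("included in review", 19),
]

# Included studies by topic, as reported in the Results section.
included_by_topic = {"anatomy education": 7, "procedural learning": 12}


def exclusions_between_stages(stages):
    """Return (label, retained, excluded_since_previous_stage) for each stage."""
    rows = []
    previous = None
    for label, retained in stages:
        excluded = 0 if previous is None else previous - retained
        rows.append((label, retained, excluded))
        previous = retained
    return rows


flow = exclusions_between_stages(stages)
for label, retained, excluded in flow:
    print(f"{label}: {retained} retained, {excluded} excluded at this step")

# Consistency check: the per-topic counts should add up to the final total.
print("topic counts match total:",
      sum(included_by_topic.values()) == stages[-1][1])
```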

Results

Medical Student Anatomy Education

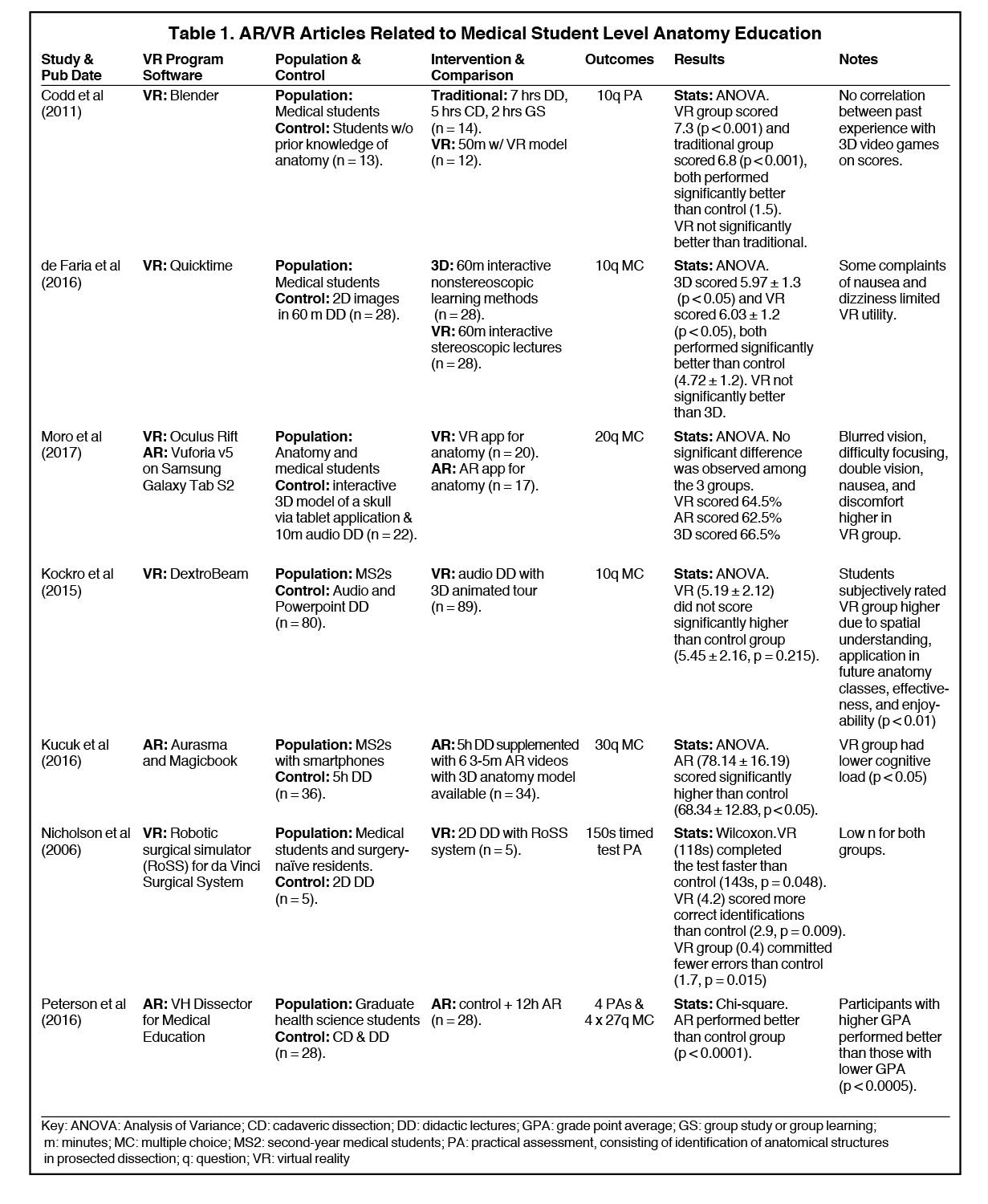

We identified 7 articles that used VR/AR to supplement anatomy courses at the pre-clerkship medical student level (Table 1).15-21 Most of the studies found that standardized test scores with AR/VR did not differ significantly from those with traditional anatomy lectures that included cadaveric dissection. A variety of VR programs were used, with no two studies using the same program for anatomy teaching. Participants included first- and second-year medical students, with one study including graduate-level students taking a medical anatomy course.19 Controls varied across the studies, but all studies were randomized controlled trials. Outcomes measured were similarly heterogeneous, ranging from 10- to 30-question multiple choice exams to practical exams requiring cadaveric identification of structures.

Medical Student or Surgery-naïve Procedural Learning

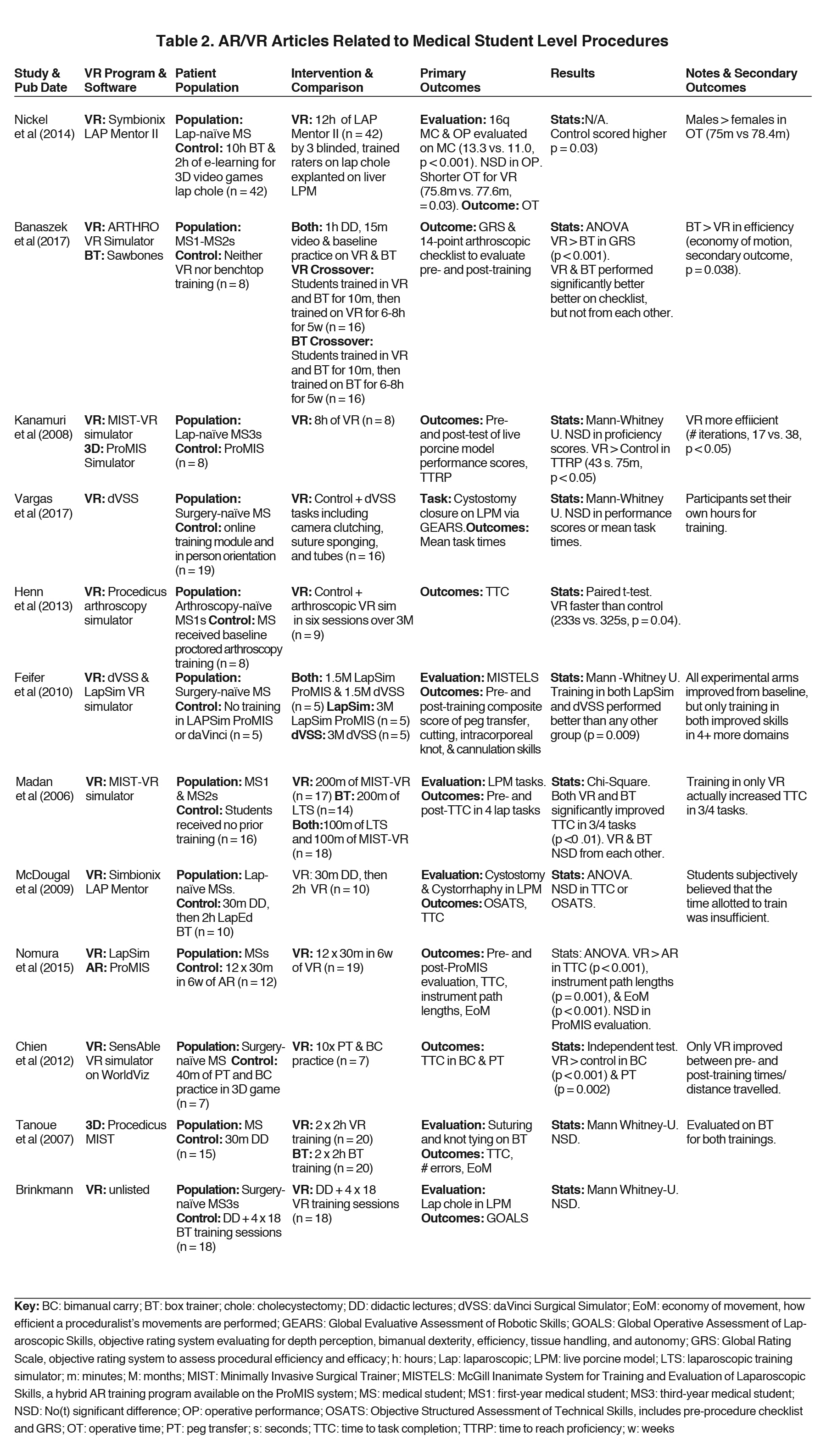

Twelve studies22-33 were identified that evaluated AR/VR training vs. box training for improving performance of procedural tasks in surgery-naïve medical students (Table 2). Box trainers are the current standard of laparoscopic training. They consist of an enclosed box with a minimum of 2 laparoscopic port sites for instrument entry, a camera that displays the inside of the box, and a variety of objects inside for practicing procedural skills. One of the most common tasks is peg transfer, in which trainees must use laparoscopic tools to pick up porous silicone objects impaled by vertical pegs and place them in a targeted area. Most of the studies found that AR/VR did not significantly differ from traditional learning methods. The most common AR/VR programs used included LAP Mentor (3D Systems; Valencia, California), Minimally Invasive Surgical Training-Virtual Reality (MIST-VR), and dVSS. Participants ranged from first-year medical students to surgery-naïve surgical interns. As with anatomy education, procedural learning control groups were highly variable. They consisted of box training, didactic lectures, online training modules, and 3D videos. Standardized outcome measures included the objective structured assessment of technical skill (OSATS), global rating scales (GRS), and various subcomponents such as time to task completion, errors committed, and economy of motion.

Discussion

Noninferiority with Standard of Practice for Learning and Teaching

The studies identified in this review suggest that AR/VR is a suitable surrogate for acquiring the visuospatial skills necessary to become proficient in anatomy and simple procedural tasks,15-36 topics highly relevant to radiation oncology residency training and, potentially, to ongoing maintenance of certification requirements. While the majority of U.S. medical schools use prosections, cadaveric dissections, and didactic lectures to teach anatomy, a standardized methodology does not exist; instead, anatomy curricula are created at the discretion of each medical school and accredited by the Liaison Committee on Medical Education (LCME). Interestingly, 2 out of 134 medical schools were able to maintain their accreditation even without traditional cadaveric dissections. This suggests that nontraditional means of producing functional anatomy curricula are practical and already in existence.37 This study specifically sought articles using medical students as participants to examine the largest possible benefit of AR/VR training in naïve learners, and the results are promising. With traditional learning dependent on live porcine models or expensive cadavers, the medical education community can benefit from the scalability and cost-effectiveness of AR/VR.

Kucuk et al and Nicholson et al showed that when the control group was taught using 2D lectures without cadaveric dissection, the AR/VR group performed significantly better.18,20 This suggests that the ability to form 3D anatomical representations is adequately learned through AR/VR training. Interestingly, Moro et al used a control group that studied a tablet-based 3D representation of neuroanatomical structures, and neither the VR nor the AR group performed significantly better than the tablet group.19 All studies controlled for prior anatomy experience, but only 3 controlled for previous experience with AR/VR. Time spent with AR/VR supplementation varied widely across studies, from as little as 24 minutes to 12 hours. The study by Peterson and Mlynarczyk falls at the longer end of this range, and its data suggest that AR-supplemented training increased standardized scores, even against traditional cadaveric dissection.21

Outcomes measured among the procedural studies consisted of multiple choice exams and practical exams composed of standardized scores for procedural effectiveness based on time to task completion, errors made, and economy of motion. The results were heterogeneous. Time allotted for AR/VR training varied widely, from 2 to 12 hours. Overall, VR training did not significantly differ from box training in terms of mean time to task completion, errors made, or economy of motion. Instead, VR training improved participants’ procedural task abilities similarly to box trainers when participants were allowed to train for equal amounts of time. Standard learning curves for procedural tasks are expected to have a steep slope early on with eventual plateauing, indicative of diminishing returns on time invested.38-41 However, determining the time to proficiency is critical in creating an effective educational course, and this outcome was not readily measured in the current studies. The advantage of a stereoscopic training environment is that it assists in visualizing a 3D world. Nevertheless, all participants were tested in a 2D laparoscopic view, and AR/VR training was still found to be noninferior to laparoscopic box training. Most of the studies used live porcine models, although Tanoue et al and Chien et al tested their participants on box transfer.24,32
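The shape of such a learning curve, and the notion of time to proficiency, can be illustrated with a simple model. The sketch below assumes an exponential approach to a plateau, which is one common way to model diminishing returns and is not taken from the cited studies. The starting skill level, plateau, time constant, and proficiency target are all hypothetical values.

```python
from math import exp, log


def skill_level(hours, start=0.2, plateau=1.0, time_constant=3.0):
    """Exponential learning curve: fast early gains that flatten toward a plateau."""
    return plateau - (plateau - start) * exp(-hours / time_constant)


def hours_to_proficiency(target, start=0.2, plateau=1.0, time_constant=3.0):
    """Invert the curve to find the training time needed to reach `target`."""
    if not start <= target < plateau:
        raise ValueError("target must be at least start and below plateau")
    return -time_constant * log((plateau - target) / (plateau - start))


# Hypothetical parameters; the gain per 2-hour block shrinks as training continues.
for hours in (0, 2, 4, 6, 8, 10, 12):
    print(f"{hours:>2} h -> skill {skill_level(hours):.2f}")

print(f"hours to reach 0.9: {hours_to_proficiency(0.9):.1f}")
```

Under a model like this, comparing two training methods at a single fixed training duration can hide differences in how quickly each reaches a given proficiency threshold, which is why time to proficiency is a useful outcome in its own right.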

Heterogeneity of Results

AR/VR research in healthcare is in its infancy. Unfortunately, this means that the available studies are single-center, industry-backed projects with small study populations and heterogeneously measured outcomes. Even the definition of virtual reality remains ambiguous, as many nonstereoscopic 3D image-based studies from the last decade used the term in their titles. Formalized training procedures for AR/VR could address this problem by standardizing the time required to reach proficiency in anatomy education and simple procedural tasks. Additionally, a gold standard for outcome measures based on a standardized time to proficiency needs to be established.

Radiation Oncology Integration

An accurate 3D understanding of tumor volumes, treatment dose distributions,12 and radiation damage to healthy tissue on computed tomography (CT), magnetic resonance imaging (MRI), ultrasound, and/or positron emission tomography (PET)/CT is necessary for radiation oncologists, who typically have no formalized radiology training. VR has already been used to help teach patients, residents, and radiation therapists about patient positioning using a projector-based virtual reality program.10 AR has also been used in pilot studies to help guide the placement of brachytherapy needles.11 Moreover, intraoperative delivery of radiation treatment, precise positioning of permanent seeds, and outpatient HDR insertion techniques all require technical expertise, which can be difficult to measure during residency and in medical practice. Standardization and practice of procedural techniques could potentially improve safety in high-risk but necessary procedures such as brachytherapy. As brachytherapy fellowships are few and rely on an apprenticeship training model, the democratization of high-quality patient care will be limited by the quantity of cases at high-volume cancer centers. As AR/VR is a versatile and scalable technology, training can be systematically improved and adjusted based on the current standards of practice, with the potential to measure individual proficiency. Corrective training and real-time peer review then become possible. In addition, treatment can be simulated without causing any patient harm, providing a safe and effective method of training next-generation radiation oncologists and ensuring the ongoing competence of existing practitioners. AR/VR technology is ready to be integrated into radiation oncology training programs, although research is needed into how best to optimize its initial and ongoing use to ensure competency.

Conclusion

As healthcare shifts toward cost-effective practices, healthcare education can benefit from the scalable nature of AR/VR. All of the studies we reviewed demonstrated noninferiority to the current standard of practice for training clinically naïve participants. For radiation oncology residents, this translates into a more immersive learning environment in a field that requires proficient visuospatial and technical abilities. Future integration opportunities may extend far beyond residency education and offer practicing radiation oncologists an immersive AR/VR means of demonstrating procedural proficiency for ongoing maintenance of certification, ultimately enhancing patient safety and ensuring the highest standards in quality of care.

References

1. Milgram P, Kishino F. A taxonomy of mixed reality visual displays. IEICE Transactions on Information Systems. 1994;E77-D(12):15.

2. Foerster RM, et al. Using the virtual reality device Oculus Rift for neuropsychological assessment of visual processing capabilities. Sci Rep. 2016;6:37016.

3. Meli L, Pacchierotti C, Prattichizzo D. Experimental evaluation of magnified haptic feedback for robot-assisted needle insertion and palpation. Int J Med Robot. 2017.

4. Barthel A, Trematerra D, Nasseri MA, et al. Haptic interface for robot-assisted ophthalmic surgery. Conf Proc IEEE Eng Med Biol Soc. 2015:4906-4909.

5. Deshpande N, Chauhan M, Pacchierotti C, et al. Robot-assisted microsurgical forceps with haptic feedback for transoral laser microsurgery. Conf Proc IEEE Eng Med Biol Soc. 2016:5156-5159.

6. Gou F, Chen H, Li MC, et al. Submillisecond-response liquid crystal for high-resolution virtual reality displays. Opt Express. 2017;25(7):7984-7997.

7. Schulze JP, Schulze-Döbold C, Erginay A, Tadayoni R. Visualization of three-dimensional ultra-high resolution OCT in virtual reality. Stud Health Technol Inform. 2013;184:387-391.

8. Hanly EJ, Marohn MR, Bachman SL, et al. Multiservice laparoscopic surgical training using the daVinci surgical system. Am J Surg. 2004;187(2):309-315.

9. Liss MA, Abdelshehid C, Quach S, et al. Validation, correlation, and comparison of the da Vinci trainer and the daVinci surgical skills simulator using the Mimic software for urologic robotic surgical education. J Endourol. 2012;26(12):1629-1634.

10. Boejen A, Grau C. Virtual reality in radiation therapy training. Surg Oncol. 2011;20(3):185-188.

11. Krempien R, Hoppe H, Kahrs L, et al. Projector-based augmented reality for intuitive intraoperative guidance in image-guided 3D interstitial brachytherapy. Int J Radiat Oncol Biol Phys. 2008;70(3):944-952.

12. Onizuka R, Araki F, Ohno T, et al. Accuracy of dose calculation algorithms for virtual heterogeneous phantoms and intensity-modulated radiation therapy in the head and neck. Radiol Phys Technol. 2016;9(1):77-87.

13. Zaorsky NG, Hurwitz MD, Dicker AP, et al. Is robotic arm stereotactic body radiation therapy “virtual high dose rate brachytherapy” for prostate cancer? An analysis of comparative effectiveness using published data [corrected]. Expert Rev Med Devices. 2015;12(3):317-327.

14. Sharma M, Williamson J, Siebers J. MO-A-137-02: Comparative efficacy of image-guided adaptive treatment strategies for prostate radiation therapy via virtual clinical trials. Med Phys. 2013;40(6Part23):387.

15. Codd AM, Choudhury B. Virtual reality anatomy: is it comparable with traditional methods in the teaching of human forearm musculoskeletal anatomy? Anat Sci Educ. 2011;4(3):119-125.

16. de Faria JW, Teixeira MJ, de Moura Sousa Júnior L, et al. Virtual and stereoscopic anatomy: when virtual reality meets medical education. J Neurosurg. 2016;125(5):1105-1111.

17. Kockro RA, Amaxopoulou C, Killeen T, et al. Stereoscopic neuroanatomy lectures using a three-dimensional virtual reality environment. Ann Anat. 2015;201:91-98.

18. Kucuk S, Kapakin S, Goktas Y. Learning anatomy via mobile augmented reality: Effects on achievement and cognitive load. Anat Sci Educ. 2016;9(5):411-421.

19. Moro C, Štromberga Z, Raikos A, Stirling A. The effectiveness of virtual and augmented reality in health sciences and medical anatomy. Anat Sci Educ. 2017.

20. Nicholson DT, Chalk C, Funnell WR, Daniel SJ. Can virtual reality improve anatomy education? A randomised controlled study of a computer-generated three-dimensional anatomical ear model. Med Educ. 2006;40(11):1081-1087.

21. Peterson DC, Mlynarczyk GS. Analysis of traditional versus three-dimensional augmented curriculum on anatomical learning outcome measures. Anat Sci Educ. 2016;9(6):529-536.

22. Banaszek D, You D, Chang J, et al. Virtual reality compared with bench-top simulation in the acquisition of arthroscopic skill: a randomized controlled trial. J Bone Joint Surg Am. 2017;99(7):e34.

23. Brinkmann C, Fritz M, Pankratius U, et al. Box- or virtual-reality trainer: which tool results in better transfer of laparoscopic basic skills? A prospective randomized trial. J Surg Educ. 2017.

24. Chien JH, Suh IH, Park SH, et al. Enhancing fundamental robot-assisted surgical proficiency by using a portable virtual simulator. Surg Innov. 2013;20(2):198-203.

25. Feifer A, Al-Ammari A, Kovac E, et al. Randomized controlled trial of virtual reality and hybrid simulation for robotic surgical training. BJU Int. 2011;108(10):1652-1656; discussion 1657.

26. Henn RF 3rd, Shah N, Warner JJ, Gomoll AH. Shoulder arthroscopy simulator training improves shoulder arthroscopy performance in a cadaveric model. Arthroscopy. 2013;29(6):982-985.

27. Kanumuri P, Ganai S, Wohaibi EM, et al. Virtual reality and computer-enhanced training devices equally improve laparoscopic surgical skill in novices. JSLS. 2008;12(3):219-226.

28. Madan AK, Frantzides CT, Sasso LM. Laparoscopic baseline ability assessment by virtual reality. J Laparoendosc Adv Surg Tech A. 2005;15(1):13-17.

29. McDougall EM, Kolla SB, Santos RT, et al. Preliminary study of virtual reality and model simulation for learning laparoscopic suturing skills. J Urol. 2009;182(3):1018-1025.

30. Nickel F, Brzoska JA, Gondan M, et al. Virtual reality training versus blended learning of laparoscopic cholecystectomy: a randomized controlled trial with laparoscopic novices. Medicine (Baltimore). 2015;94(20):e764.

31. Nomura T, Mamada Y, Nakamura Y, et al. Laparoscopic skill improvement after virtual reality simulator training in medical students as assessed by augmented reality simulator. Asian J Endosc Surg. 2015;8(4):408-412.

32. Tanoue K, Ieiri S, Konishi K, et al. Effectiveness of endoscopic surgery training for medical students using a virtual reality simulator versus a box trainer: a randomized controlled trial. Surg Endosc. 2008;22(4):985-990.

33. Vargas MV, Moawad G, Denny K, et al. Transferability of virtual reality, simulation-based, robotic suturing skills to a live porcine model in novice surgeons: a single-blind randomized controlled trial. J Minim Invasive Gynecol. 2017;24(3):420-425.

34. Trelease RB, Nieder GL. Transforming clinical imaging and 3D data for virtual reality learning objects: HTML5 and mobile devices implementation. Anat Sci Educ. 2013;6(4):263-270.

35. Matzke J, Ziegler C, Martin K, et al. Usefulness of virtual reality in assessment of medical student laparoscopic skill. J Surg Res. 2017;211:191-195.

36. Nickel F, et al. Virtual reality does not meet expectations in a pilot study on multimodal laparoscopic surgery training. World J Surg. 2013;37(5):965-973.

37. Mintz ML. Resources for learning anatomy. AAMC Curriculum Inventory in Context. 2016;3(12).

38. Padin EM, Santos RS, Fernández SG, et al. Impact of three-dimensional laparoscopy in a bariatric surgery program: influence in the learning curve. Obes Surg. 2017.

39. Kyriazis I, Özsoy M, Kallidonis P, et al. Integrating three-dimensional vision in laparoscopy: the learning curve of an expert. J Endourol. 2015;29(6):657-660.

40. Suguita FY, Essu FF, Oliveira LT, et al. Learning curve takes 65 repetitions of totally extraperitoneal laparoscopy on inguinal hernias for reduction of operating time and complications. Surg Endosc. 2017.

41. Cologne KG, Zehetner J, Liwanag L, et al. Three-dimensional laparoscopy: does improved visualization decrease the learning curve among trainees in advanced procedures? Surg Laparosc Endosc Percutan Tech. 2015;25(4):321-323.

Citation

Jin W, Birckhead B, Perez B, Hoffe S. Augmented and virtual reality: Exploring a future role in radiation oncology education and training. Appl Rad Oncol. 2017;(4):13-20.

December 14, 2017